Automating Algorithm Discovery: A Case Study in Congestion Control Optimization

This post is part of our AI-Driven Research for Systems (ADRS) case study series, where we use AI to automatically discover better algorithms for real-world systems problems.

In this post, we apply ADRS frameworks to improve congestion control algorithms (CCAs) in datacenter networking. The goal is to reduce the queue length in data center networks and thus reduce latency. We explore how evolutionary frameworks, such as OpenEvolve, can improve congestion control algorithms. We use NSDI '22 PowerTCP's 10:1 incast benchmark to evaluate congestion algorithms. The evolved algorithm reduces queue length by 49%.

- 🕸️ ADRS Blog Series: https://ucbskyadrs.github.io/

- 📝 Paper: https://arxiv.org/abs/2510.06189

- 👩💻 Code: github.com/UCB-ADRS/ADRS

- 💬 Join us: join.slack.com/t and Discord

- Follow us: x.com/ai4research_ucb

The Problem: Datacenter Networking

In this blog post, we apply ADRS frameworks to improve SOTA congestion control algorithms (CCAs) in datacenter networking. Since the late 1980s, congestion control algorithms (CCAs) have played a central role in maintaining network stability and fairness, evolving from early loss-based mechanisms designed for wide-area networks to more sophisticated approaches that respond to delay, bandwidth estimation, and explicit signals.

In recent years, the rapid expansion of cloud computing and large-scale distributed services has fundamentally expanded the operating environment for congestion control algorithms. Modern datacenter networks (DCNs) are characterized by high bandwidth, low latencies, and highly synchronized traffic patterns. These conditions expose limitations in traditional CCAs and place increasingly stringent demands on both throughput and tail latency. As a result, congestion control has re-emerged as a critical area of innovation, requiring algorithms that can detect congestion quickly, react accurately, and operate efficiently at scale.

The NSDI '22 paper PowerTCP tackles this problem by introducing a power-based congestion control algorithm, which achieves much more fine-grained congestion control by adapting to the bandwidth-window product(power). We use this approach as baseline and use OpenEvolve to improve upon it.

Previous Approaches

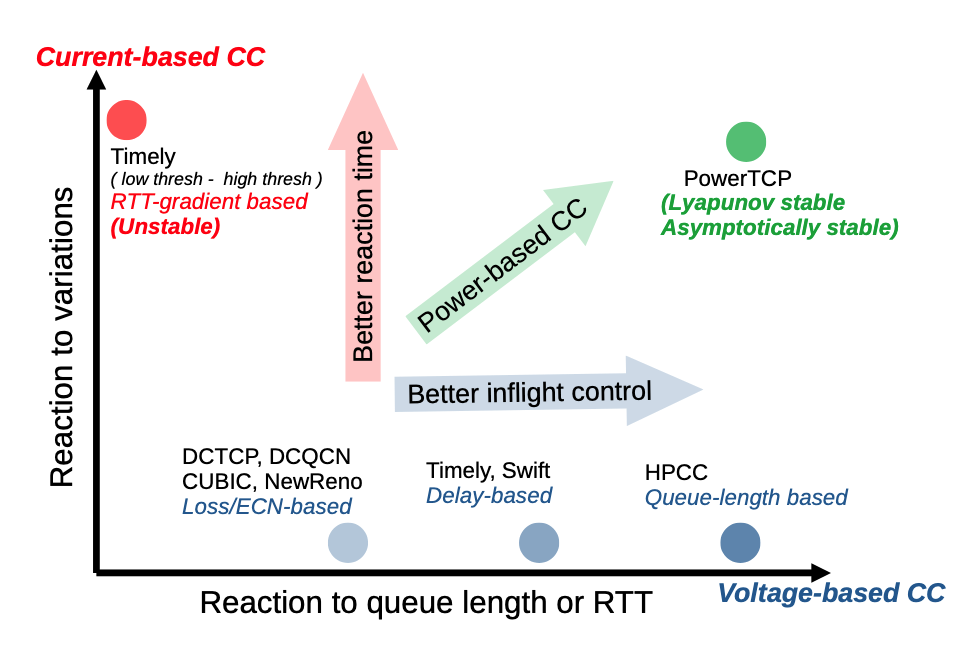

Traditionally, there are two main categories of congestion control algorithms. The first category (voltage-based) consists of algorithms that react to queue length or RTT-based algorithms, such as CUBIC, DCTCP, or Vegas. The second category (current-based) contains algorithms that react to variations, such as the change in RTT (e.g., the TIMELY algorithm).

PowerTCP takes into consideration both "voltage" and "current" (shown in Figure 1) for control action using measurements taken within the network and propagated through in-band network telemetry (INT). This allows the algorithm to maintain low queue length and resolve congestion rapidly. Since in-band network telemetry is not supported by a lot of legacy switches widely used in data centers, the paper also proposes θ-PowerTCP through accurate RTT measurement capabilities at the end-host that allow for deployment when INT is not supported by switches in the datacenter. In this case study, we use the existing SOTA, θ-PowerTCP, as the baseline algorithm and starting point for our evolution.

Evaluation of Baselines

We first discuss the baselines, benchmarks and SOTAs for evaluation. Unfortunately, there is no single unified benchmark for networking or datacenter networking. This is due to the large workload diversity of networking applications, even within datacenter networking. Different workloads can have entirely different characteristics, so generally, researchers design their evaluations around reasonable assumptions about targeted type of network and then create test scenarios to test these properties.

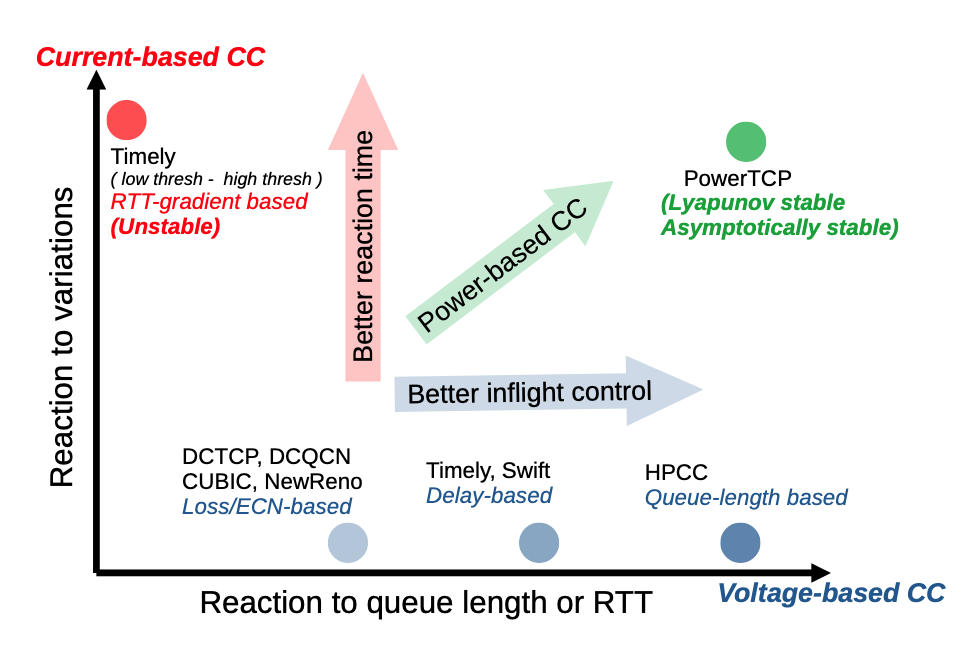

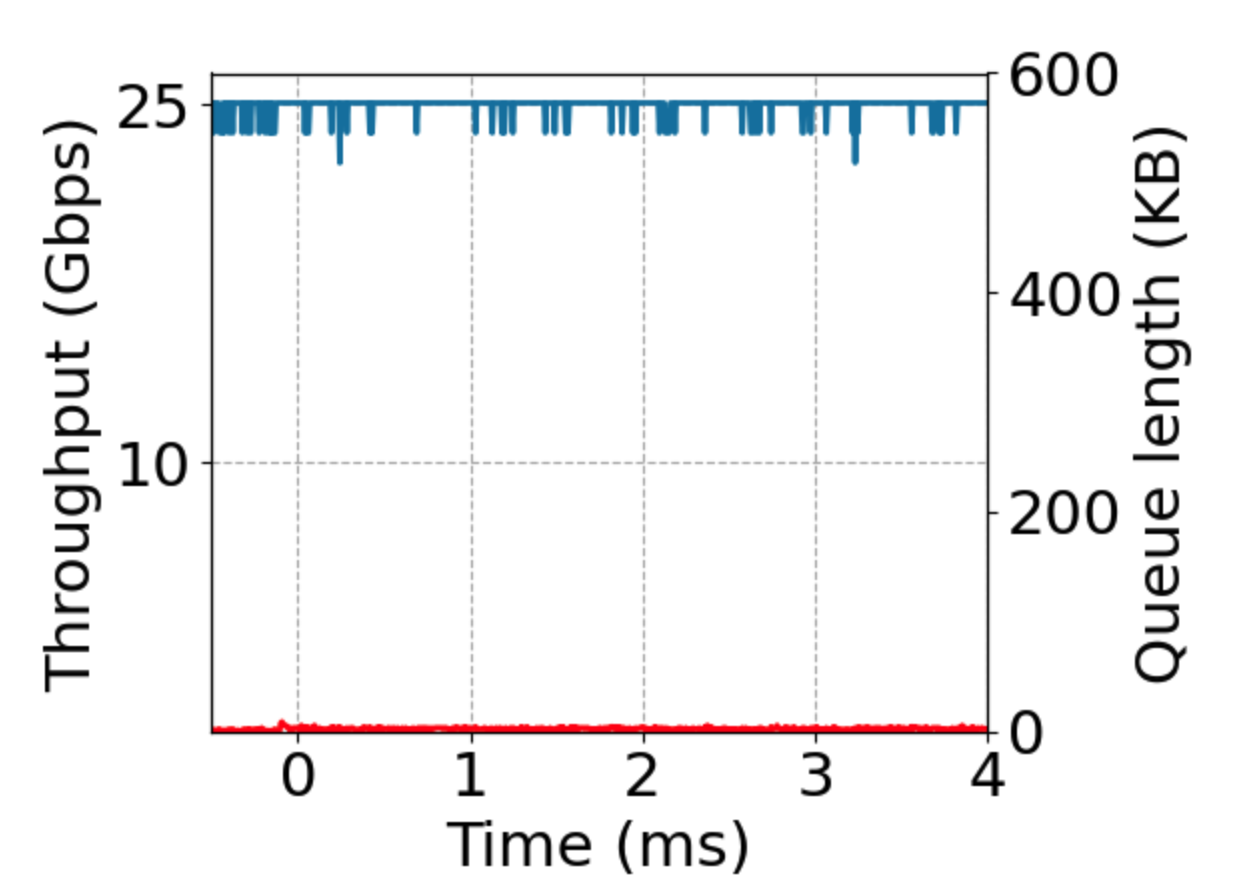

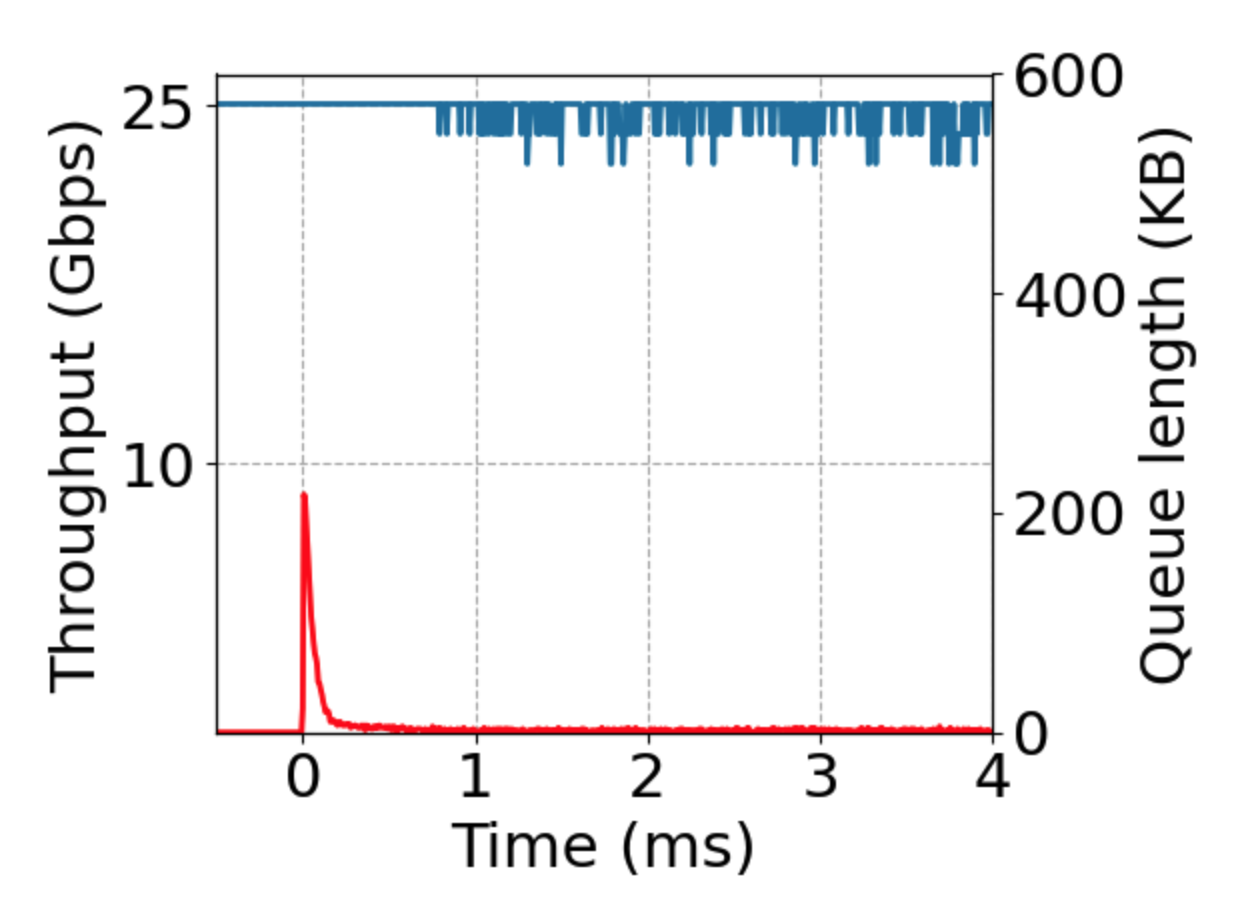

For the purposes of improving CCA algorithms, we use the incast benchmark in the PowerTCP paper. The benchmark is built with the deterministic Network Simulator 3 (NS3). Specifically, the benchmark focuses on a deployment scenario in the context of Remote Direct Memory Access (RDMA) networks, where the CC algorithm is implemented on a Network Interface Card (NIC). At time t = 0, we launch ten flows simultaneously towards the receiver of a long flow leading to a 10:1 incast. PowerTCP and θ-PowerTCP are SOTA algorithms, and in this benchmark, they quickly mitigate the incast and reach near zero queue lengths without losing throughput, while other algorithms such as TIMELY, HPCC and HOMA suffer (see Figure 2).

Improving θ-PowerTCP with OpenEvolve

To test whether an ADRS can discover a better-performing solution, we apply OpenEvolve to this problem, using the θ-PowerTCP mentioned above as the starting program. We evolve the policy over 100 iterations, which takes ~1.5 hours, using Gemini-3-Pro.

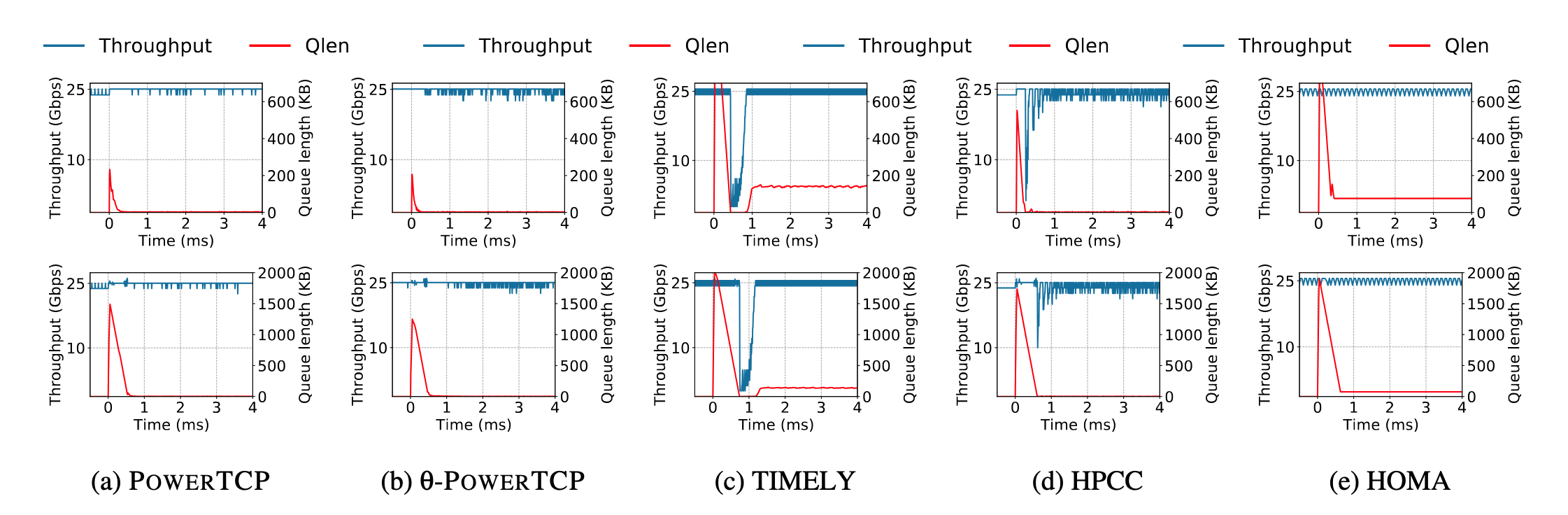

Combined Score

Since the two metrics we care about are throughput and queue length, we calculate the combined score with the following formula:

combined_score = e^(avg_throughput - 20) - avg_queue_len / 100

In this benchmark, the goal is to minimize queue length while keeping throughput high. Since the throughput is in Gbps with a maximum of 25, and queue length is in bytes, we divide the queue length by 100 to normalize the two units. To further penalize the reduction of throughput, we add an exponential term to the throughput.

The Evolution Process

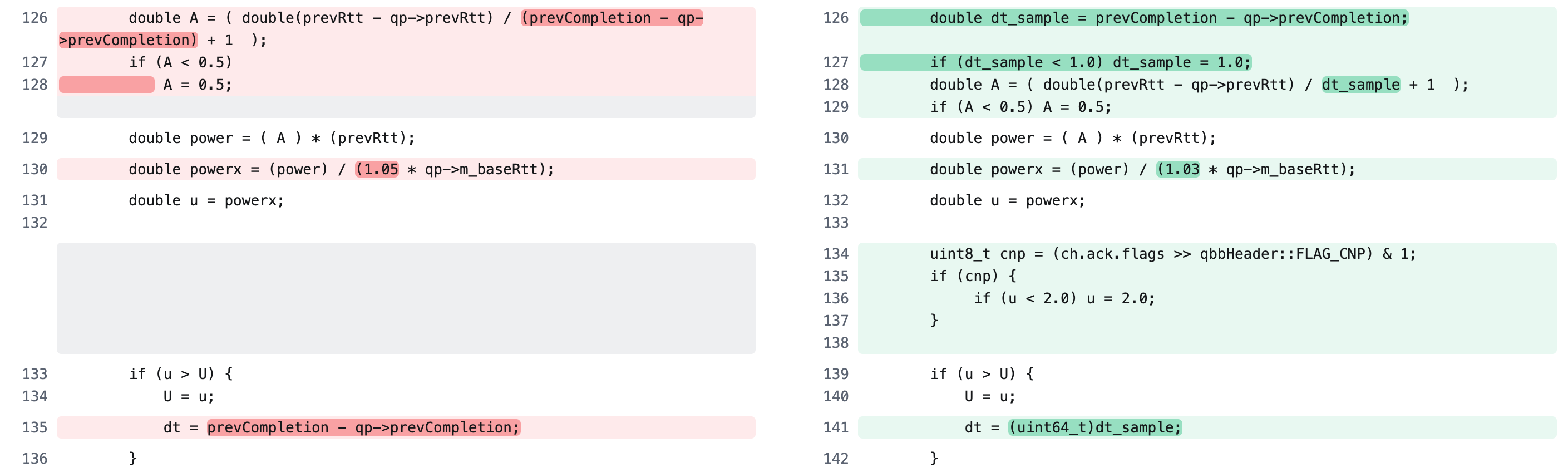

In the best program highlighted in Figure 3, the combined score was able to improve to 125.64 from initial program's 68.97. Specifically, the average queue length reduced by 49% from 4769.38 to 2412.49, while throughput remained roughly the same. The evaluation result is shown as follows in Figure 4a and 4b:

Evolution Results

The resulting algorithm tuned a few parameters as shown here. Specifically, OpenEvolve's CCA lowers initial transmission rates and makes the algorithm more sensitive and responsive to changes. This allows the algorithm to avoid congestion and respond to rate changes quickly to utilize the bandwidth.

What about Side Effects?

While OpenEvolve's solution improved on the NS3 10:1 incast benchmark, we want to learn about the side effects of the changes made by OpenEvolve. Are other aspects of the algorithm compromised to improve evaluation scores? To evaluate OpenEvolve's solution in more detail, we benchmark OpenEvolve's CCA on two additional benchmarks presented in PowerTCP paper.

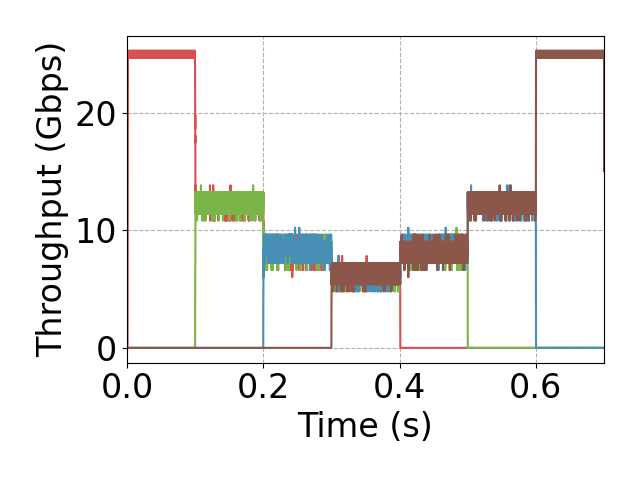

Fairness

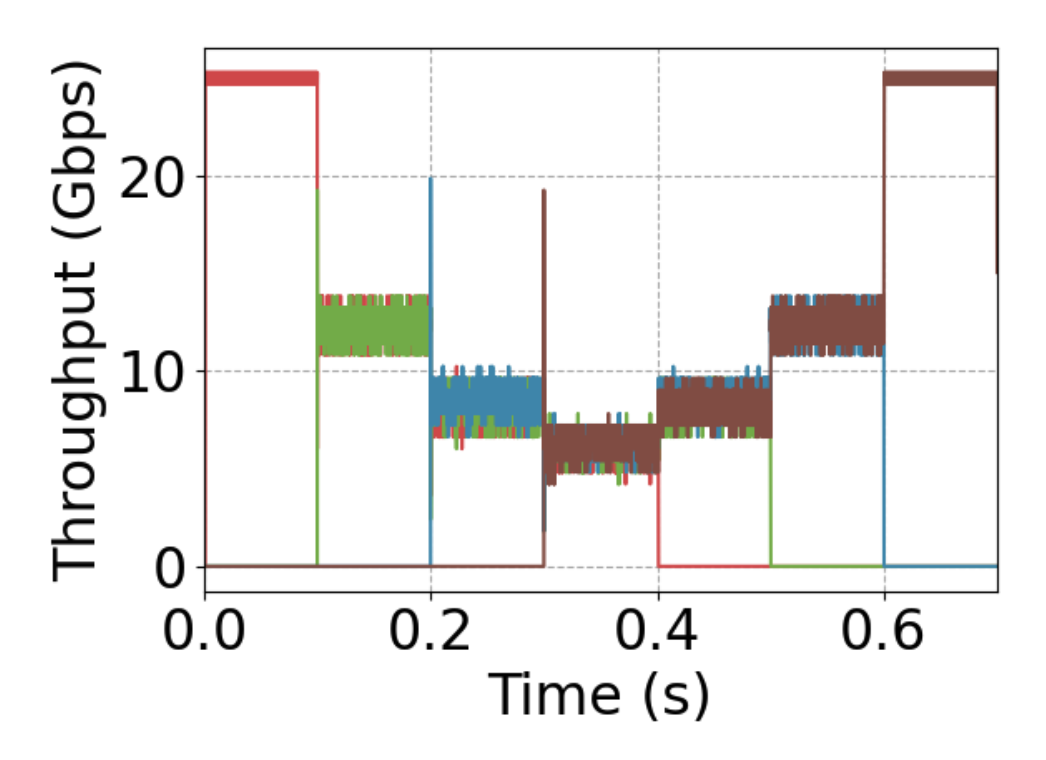

In congestion control algorithms, fairness refers to how a network's total available bandwidth is distributed among competing flows. The following graphs show how bandwidth is shared by multiple flows as they arrive and leave. A thinner line means throughputs are allocated more evenly, and the CCA is more fair and stable.

The results show that OpenEvolve's CCA achieves better fairness compared to the initial θ-PowerTCP program, with more stable bandwidth allocation and less overcorrection.

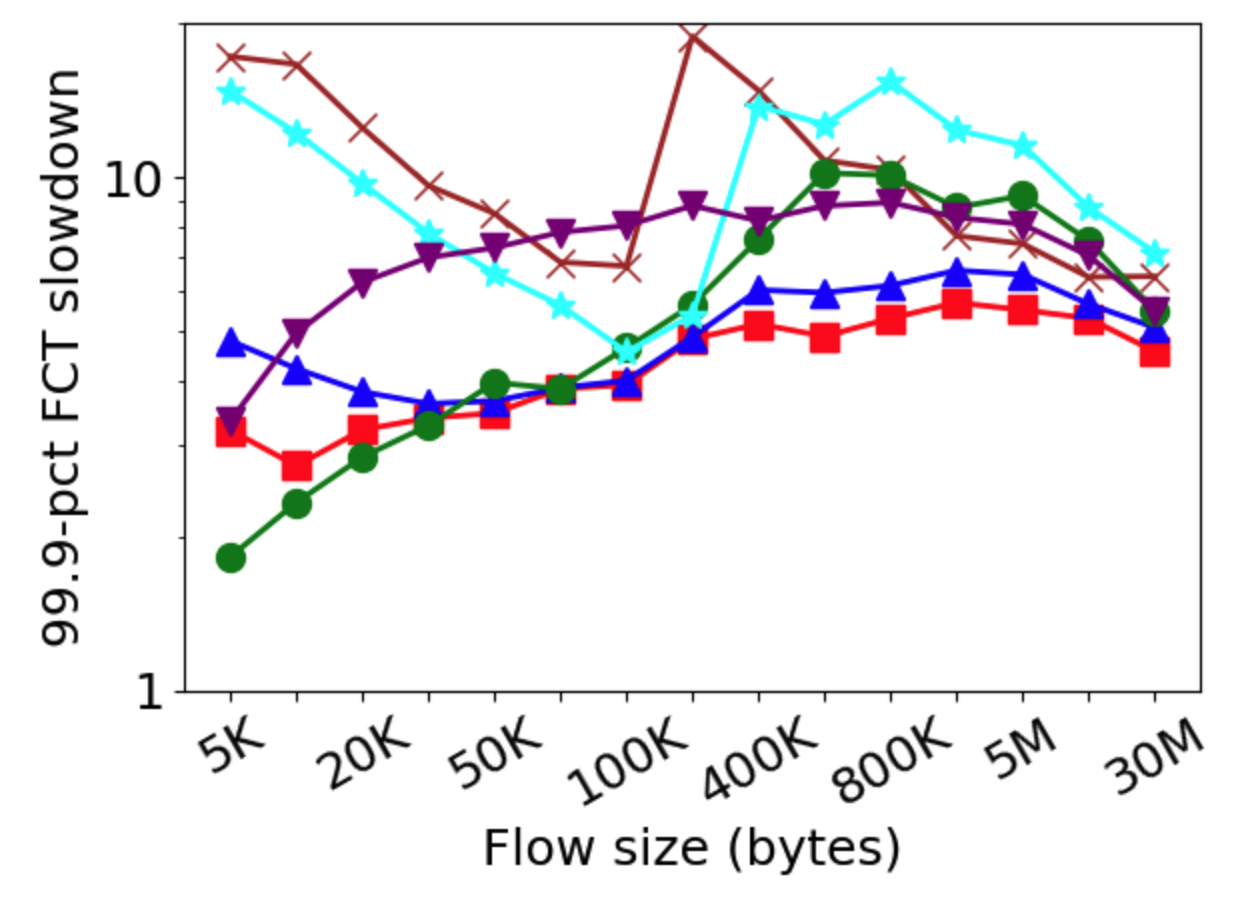

Web Search Workload

We additionally benchmark OpenEvolve's solution on a web search workload and measure 99.9th percentile flow completion times. DatacenterTCP is the algorithm generated by OpenEvolve (see purple line in Figure 7), and it shows a slowdown with smaller flow sizes, while outperforming θ-PowerTCP(Green line in Figure 7) on larger flows. We think this is due to OpenEvolve favoring algorithms that perform well in the 10:1 incast large flow benchmark and putting less emphasis on performance of shorter flows. This leads to an algorithm that works especially well in large flows but performs worse in small flows.

OpenEvolve as a General-Purpose Optimizer

In this blog post, we showcase how we apply ADRS to automatically improve congestion control algorithms. ADRS is able to improve the existing algorithm and reduce the queue length by 49% in a 10:1 incast benchmark over existing state-of-the-art algorithms from PowerTCP in NSDI '22.

This case highlights the opportunity of utilizing OpenEvolve and other ADRS frameworks as general-purpose optimizers. Given any system, we can use ADRS to automatically optimize the system for a specific scenario at low cost. This shows the potential to optimize bespoke systems and solutions for specific use cases.

Contribute to the ADRS Blog Series!

The AI-Driven Research Systems (ADRS) initiative is an open, collaborative effort to explore how AI can accelerate scientific discovery itself, from evolving algorithms to optimizing real-world systems.

If you've built, optimized, or experimented with AI-driven research tools, we'd love to hear from you. Share your experiences, insights, or case studies with us in the ADRS Blog Series.

👉 Reach out to us via email: ucbskyadrs@gmail.com

💬 Join us: join.slack.com/t and Discord

🗞️ Follow us: x.com/ai4research_ucb