Blog

Insights and case studies on AI-Driven Research for Systems.

18 total posts.

2026

9 posts

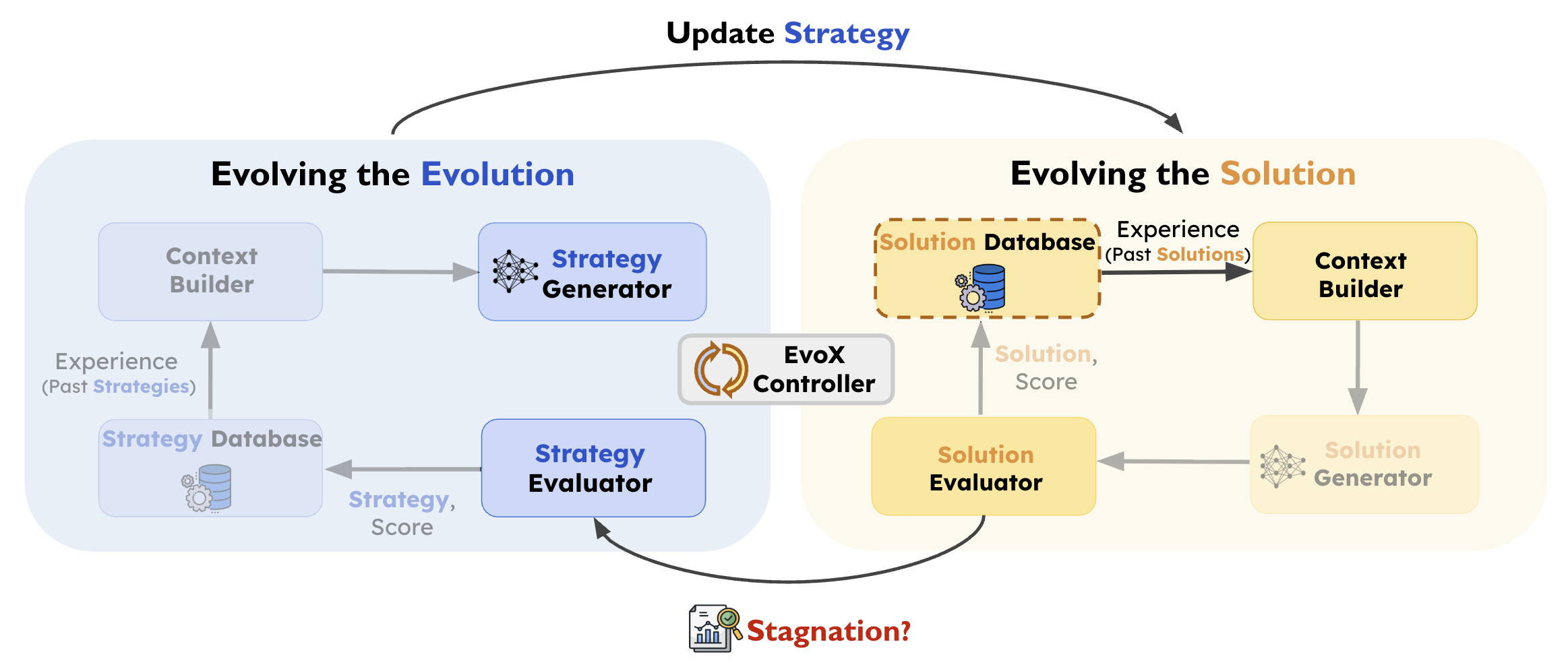

EvoX: Letting AI Evolve Its Own Evolution Process

EvoX introduces meta-evolution for LLM-driven optimization: a two-level evolutionary process that evolves both candidate solutions and the strategy guiding their generation. It outperforms AlphaEvolve, OpenEvolve, GEPA, and ShinkaEvolve across ~200 diverse optimization tasks, including ADRS systems benchmarks.

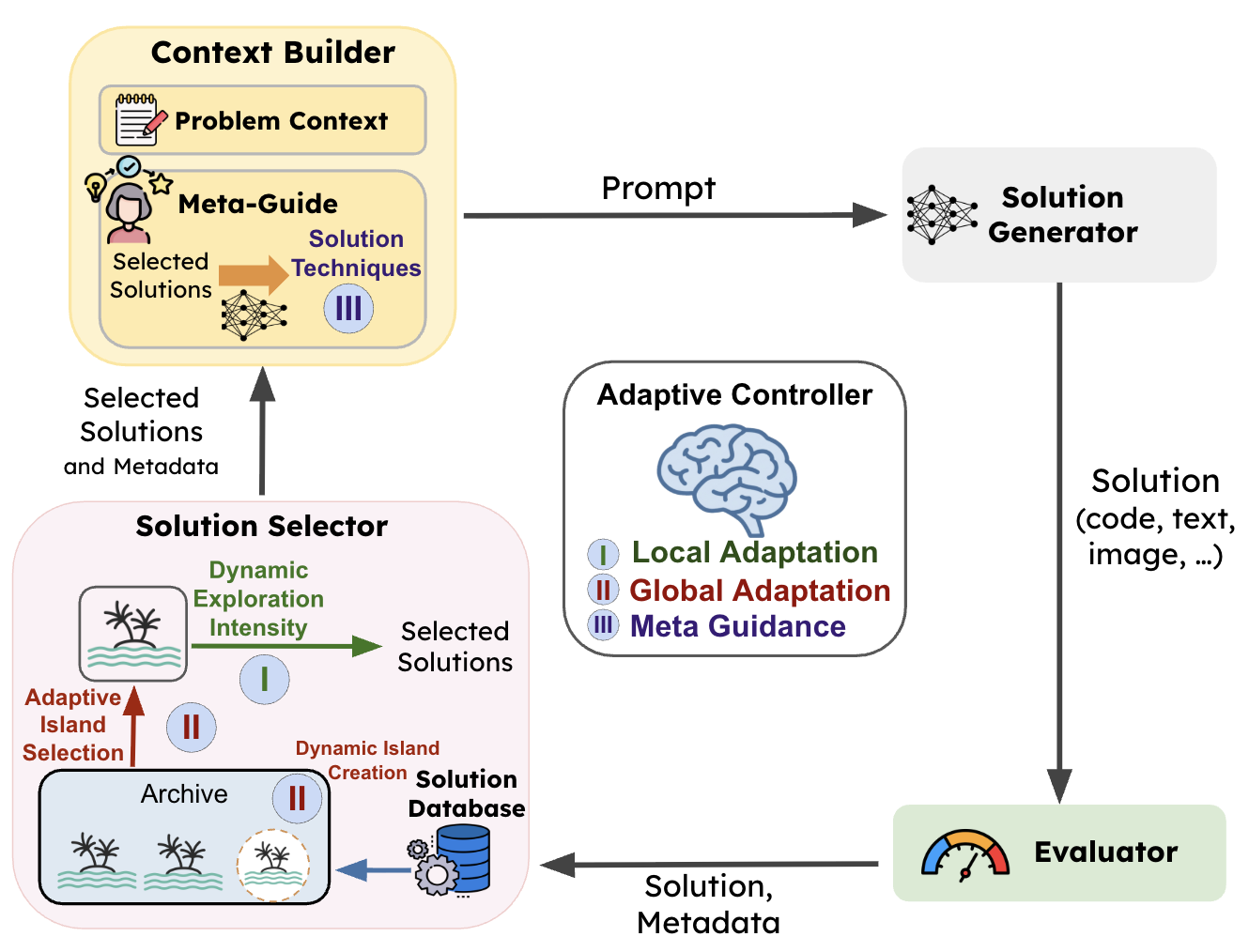

AdaEvolve: Adaptive LLM-Driven Zeroth-Order Optimization

AdaEvolve is a hierarchical adaptive algorithm for LLM-driven evolutionary search that treats fitness improvement trajectories as a gradient analogue. A single accumulated improvement signal drives three synchronized adaptation levels, achieving SOTA across 185 diverse optimization tasks — including ADRS systems benchmarks.

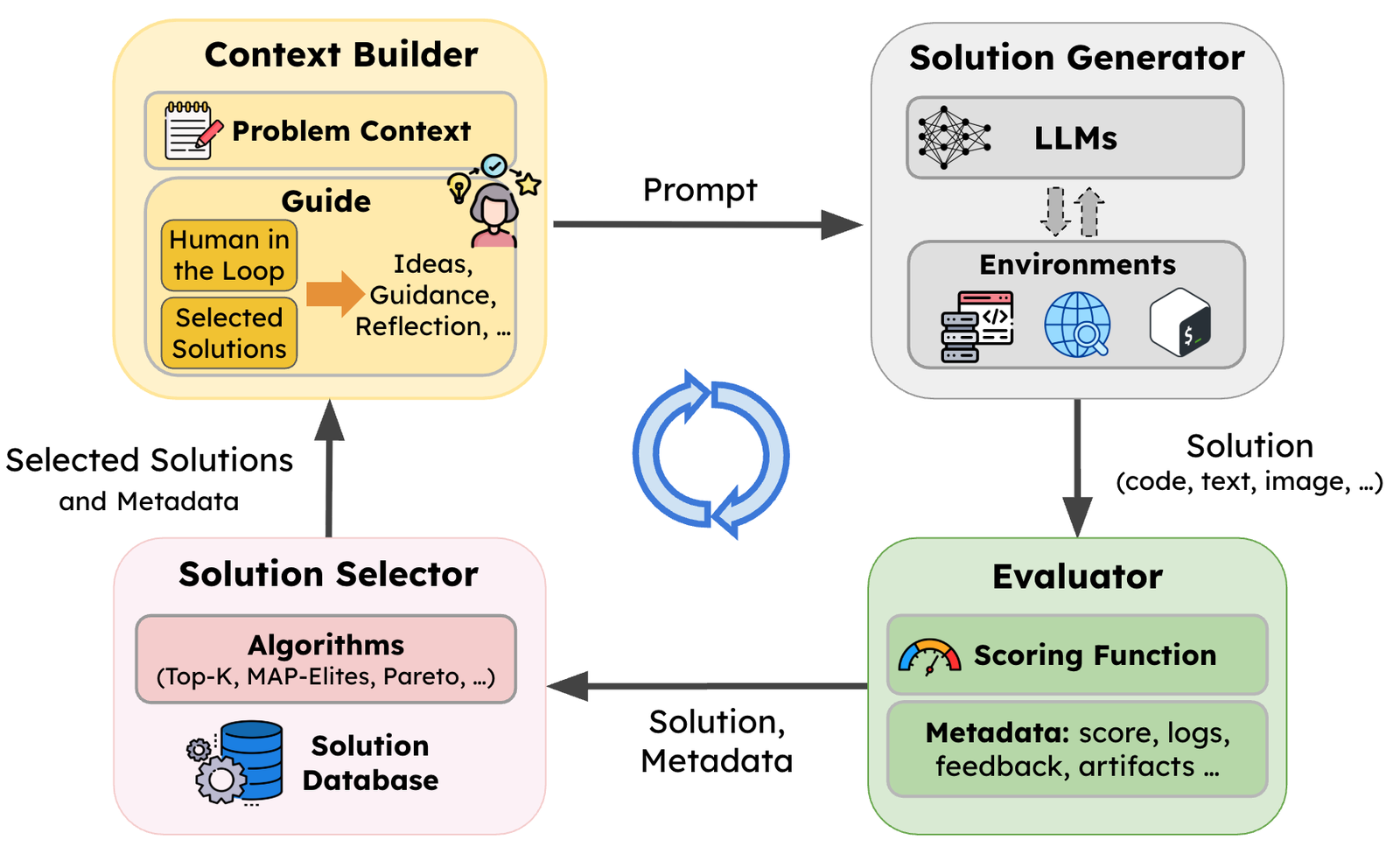

SkyDiscover: A Flexible Framework for AI-Driven Scientific and Algorithmic Discovery

SkyDiscover is a modular, open-source framework for LLM-driven evolutionary search — achieving new state-of-the-art on Frontier-CS, ADRS systems benchmarks, and 200+ optimization tasks spanning competitive programming, circle packing, MoE load balancing, and GPU model placement.

Automating Algorithm Discovery: A Case Study in Improving Multi-Agent Reasoning Systems using MAST (Part 2)

In our previous work ("Automating Algorithm Discovery with MAST"), we showed that the Multi-Agent Systems Failure Taxonomy (MAST) could transform the traditionally manual process of agent debugging into a tractable search problem. By using fine-gr...

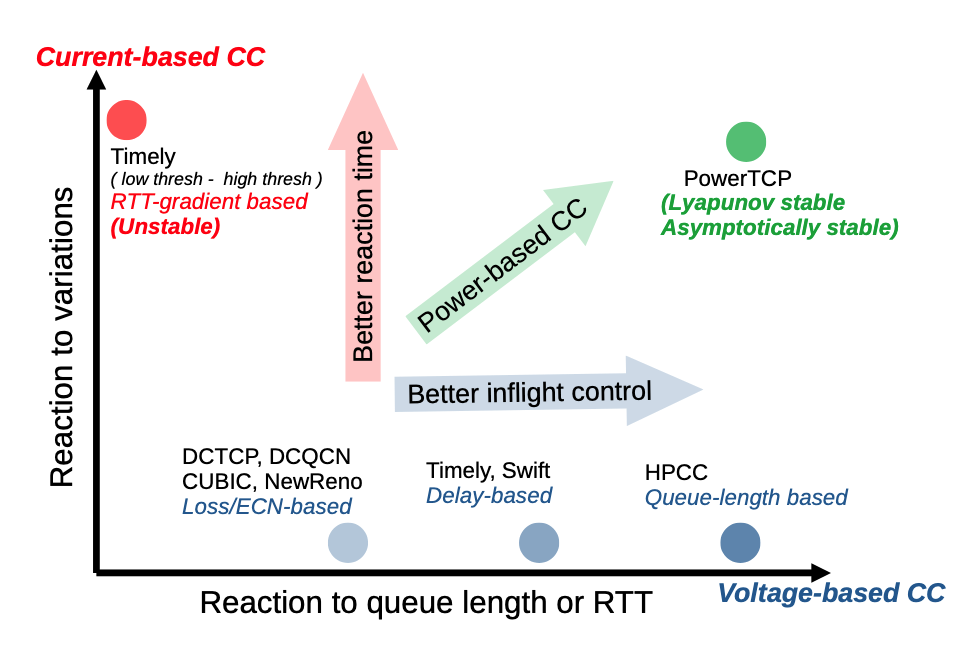

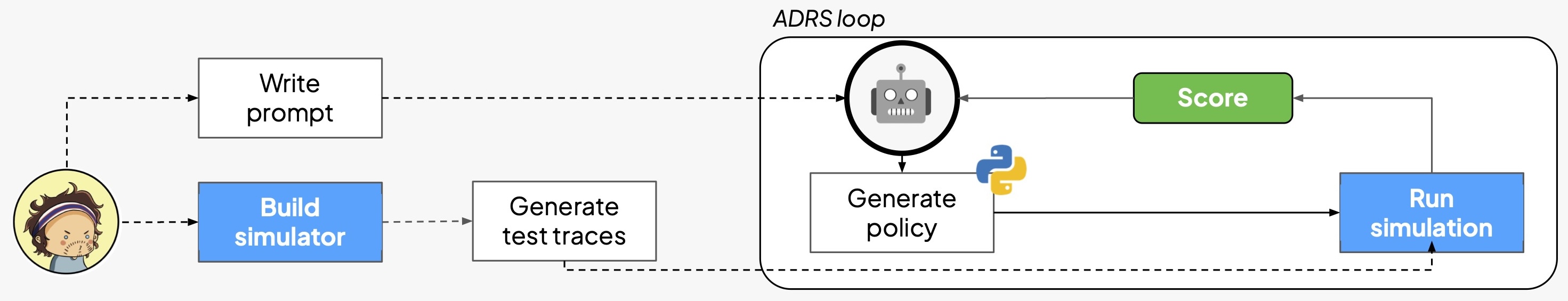

Automating Algorithm Discovery: A Case Study in Congestion Control Optimization

In this blog post, we apply ADRS frameworks to improve state-of-the-art congestion control algorithms in datacenter networking. In just 1.5 hours of autonomous evolution, the AI evolved the SOTA baseline from PowerTCP into a more responsive algorithm, reducing average queue length by 49% while maintaining high throughput.

Automating Algorithm Discovery: A Case Study on Sparse Attention Design using SkyLight

This post is the twelfth in our ADRS series. We study sparse attention for accelerating decoding in Large Language Models (LLMs). We explore how AI, specifically a Cursor agent within the SkyLight framework, can evolve towards state-of-the-art solutions like vAttention, transitioning from basic windowed attention to a complex, hardware-aware strategy that significantly accelerates the decoding phase.

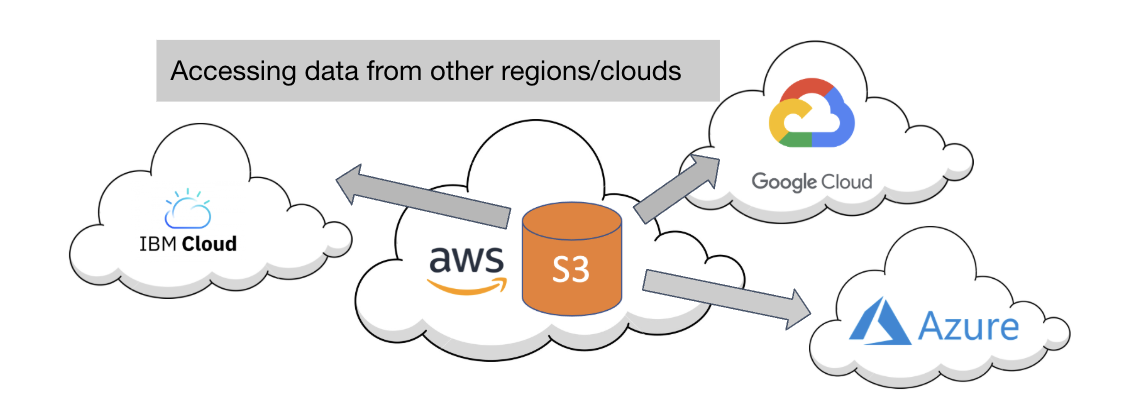

Automating Algorithm Discovery: A Case Study in Multi-Cloud Data Transfer

This post is the eleventh in our ADRS series. We tackle the challenge of efficiently accessing data across multiple cloud providers and regions, previously addressed by Cloudcast. By leveraging ADRS to evolve scheduling policies, we discover novel algorithms that minimize latency and cost when accessing data from different clouds.

Automating Algorithm Discovery in the Lakehouse: Leveraging ADRS to Improve Bauplan

This post is the tenth in our ADRS series, where we use AI to automatically discover better algorithms for real-world systems problems. In this blog, we partner with Bauplan to explore how ADRS can optimize policy generation for data pipeline systems. By combining simulation-driven evaluation with an evolutionary search loop, we demonstrate how AI can iteratively refine scheduling policies and configuration parameters, achieving significant performance improvements over hand-tuned baselines.

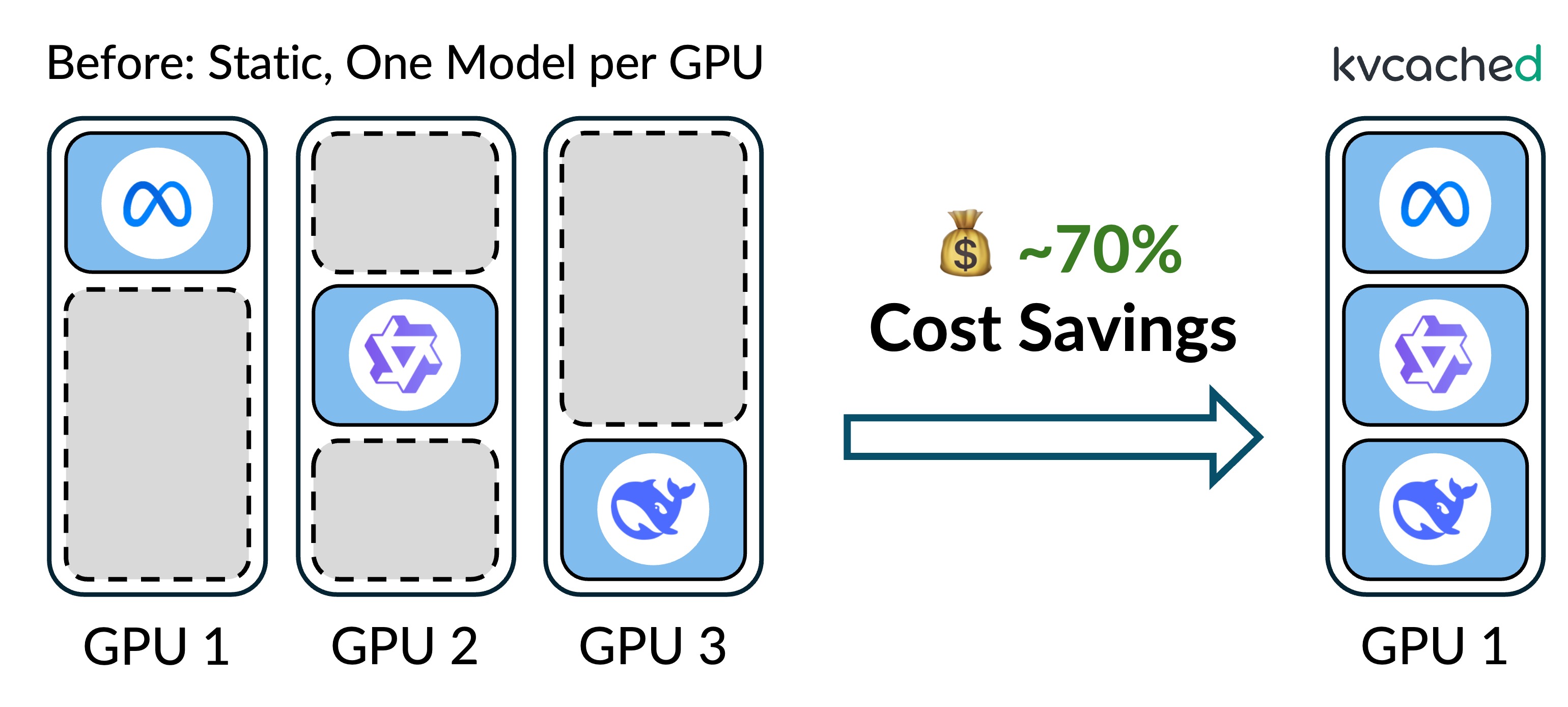

Automating Algorithm Discovery: A Case Study in Scheduler Design for Multi-LLM Serving Systems

This post is the ninth in our ADRS series, where we use AI to automatically discover better algorithms for real-world systems problems. We explore the challenges of managing GPU memory for LLM inference serving and highlight Prism, a system that optimizes memory management and model placement. By leveraging an exact-caching mechanism and optimized scheduling, Prism achieves ~70% cost savings compared to static assignments.

2025

9 posts

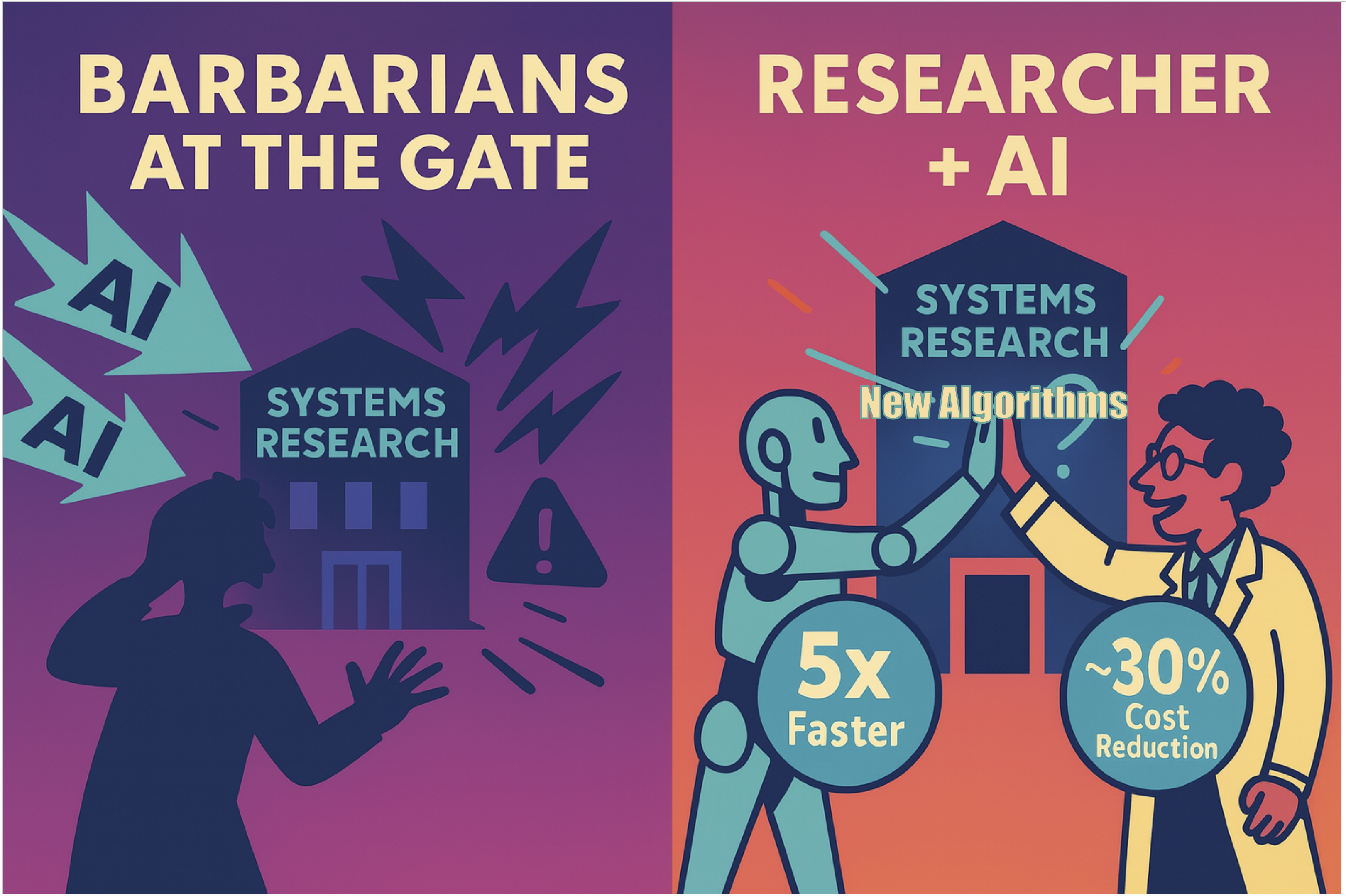

Let the Barbarians In: How AI Can Accelerate Systems Performance Research

This post is the eighth in our ADRS series, which expands upon our work on AI-Driven Research for Systems (ADRS). We evaluate three open-source frameworks across ten real-world research problems, demonstrating their ability to generate solutions that outperform human experts, including a 13x speedup in load balancing and 35% cost savings in cloud scheduling. Based on these findings, we outline best practices for problem specification, evaluation, and feedback, providing a roadmap for applying these tools effectively.

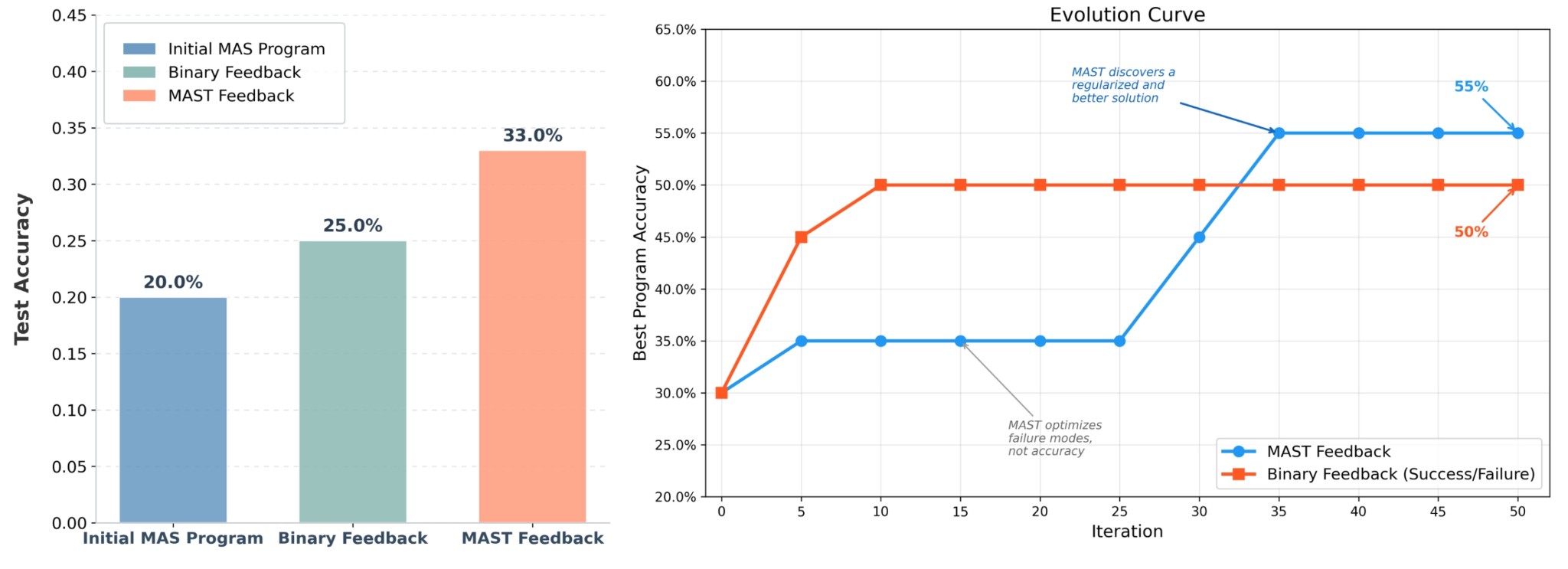

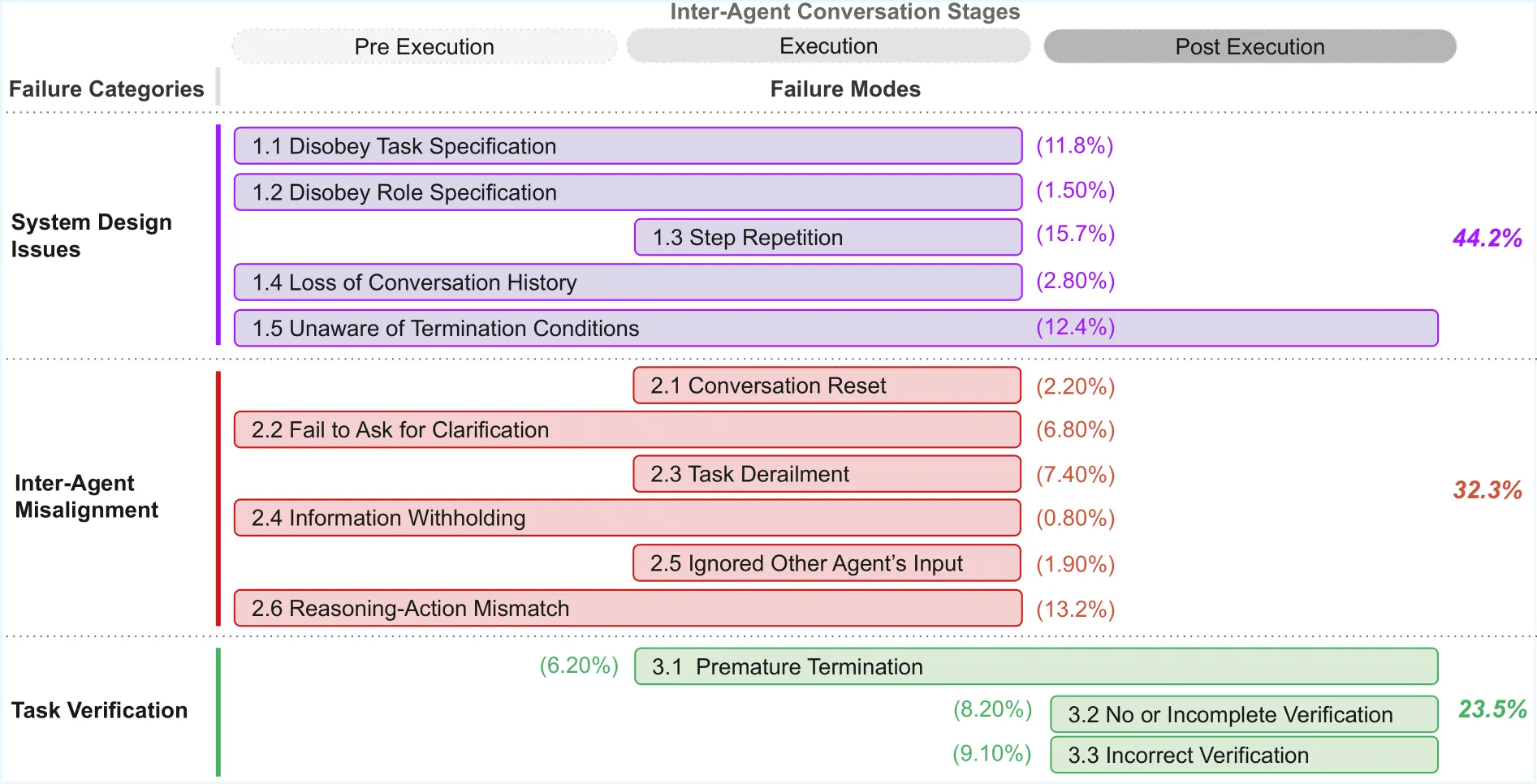

Automating Algorithm Discovery: A Case Study in Improving Multi-Agent System Design using MAST

This post is the seventh in our ADRS series. Designing effective multi-agent systems typically requires debugging workloads via execution logs and iteratively refining the agentic systems' behavior. In this blog, we replace hand-tuning with OpenEvolve to optimize the Multi-Agent System code directly. By leveraging MAST feedback, OpenEvolve continuously mutates the architecture, automatically converging toward a more reliable system and reducing the failure rate by 7x.

Automating Algorithm Discovery: A Case Study in Kernel Generation with Datadog BitsEvolve

This post is the sixth in our ADRS series. We feature exciting work from Datadog this week! We examine the problem of generating production-ready, optimized GPU code from an evolutionary search perspective. Through profile guidance and robust evaluation mechanisms, we show how BitsEvolve-generated code can outperform compiled models, achieving speedups of up to 1.6x with reasonable search costs.

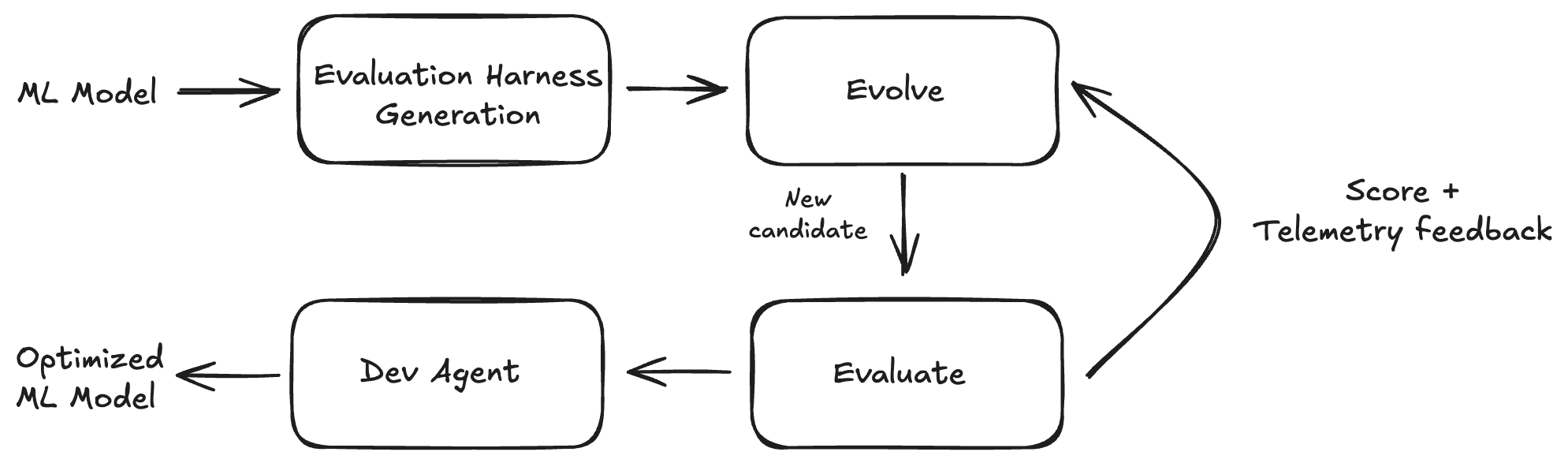

Autocomp: An ADRS Framework for Optimizing Tensor Accelerator Code

This post is the fifth in our ADRS series. We highlight Autocomp, the first LLM-driven code optimizer for low-resource tensor accelerators. Autocomp helps hardware designers extract the full performance of tensor accelerators, outperforming human expert kernel writers by up to 17x on AWS Trainium while being highly portable and easy to use.

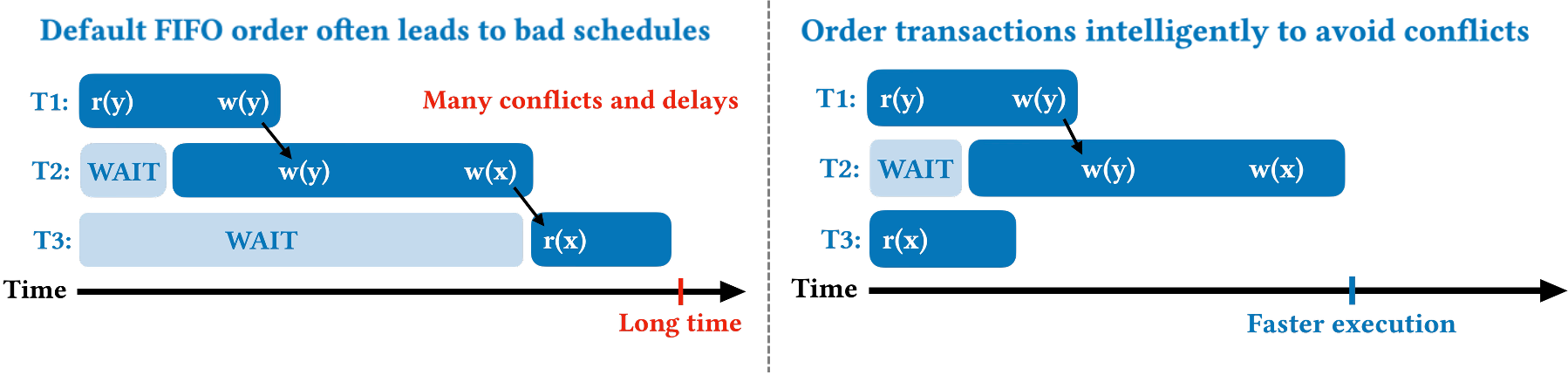

Automating Algorithm Discovery: A Case Study in Transaction Scheduling

This post is the fourth in our ADRS series. In this blog, we revisit a recent research problem from our VLDB '24 paper, Towards Optimal Transaction Scheduling, which minimizes contention for database transactional workloads. We show how we leverage an ADRS framework to discover an algorithm that produces 34% faster schedules. This case study shows how AI can be used to develop solutions for different problem settings that would otherwise require manual redesign.

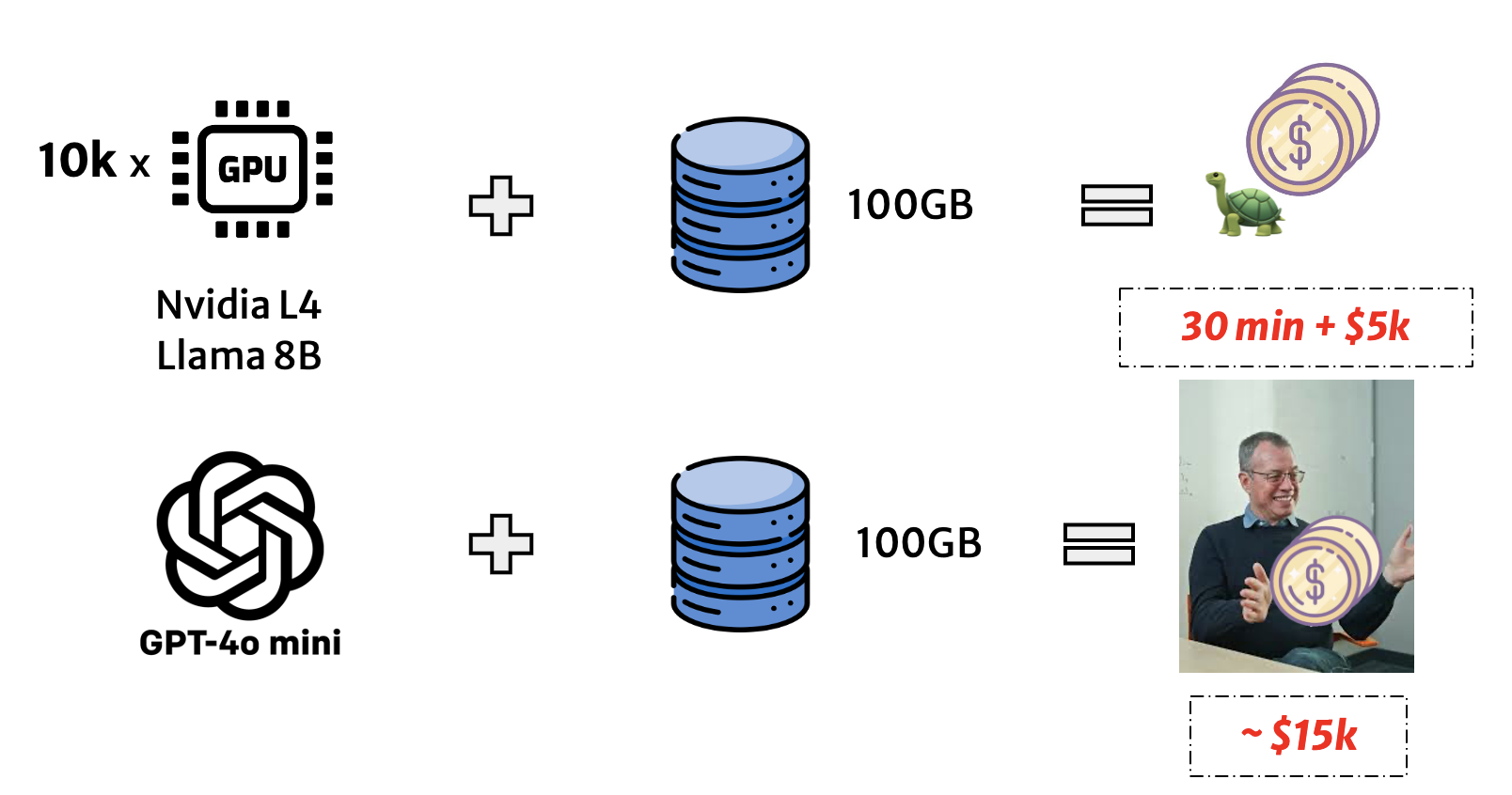

Automating Algorithm Discovery: A Case Study in Optimizing LLM Queries over Relational Workloads

This post is the third in our ADRS series, where we use AI to automatically discover better algorithms for real-world systems problems. In this blog, we revisit a recent research challenge from our MLSys'25 paper, Optimizing LLM Queries in Relational Data Analytics Workloads, which tackles the high cost and latency of executing LLM queries over relational data. We show how ADRS, using OpenEvolve, autonomously discovered a 3x faster algorithm that achieves the same prefix reuse ratio as the published solution.

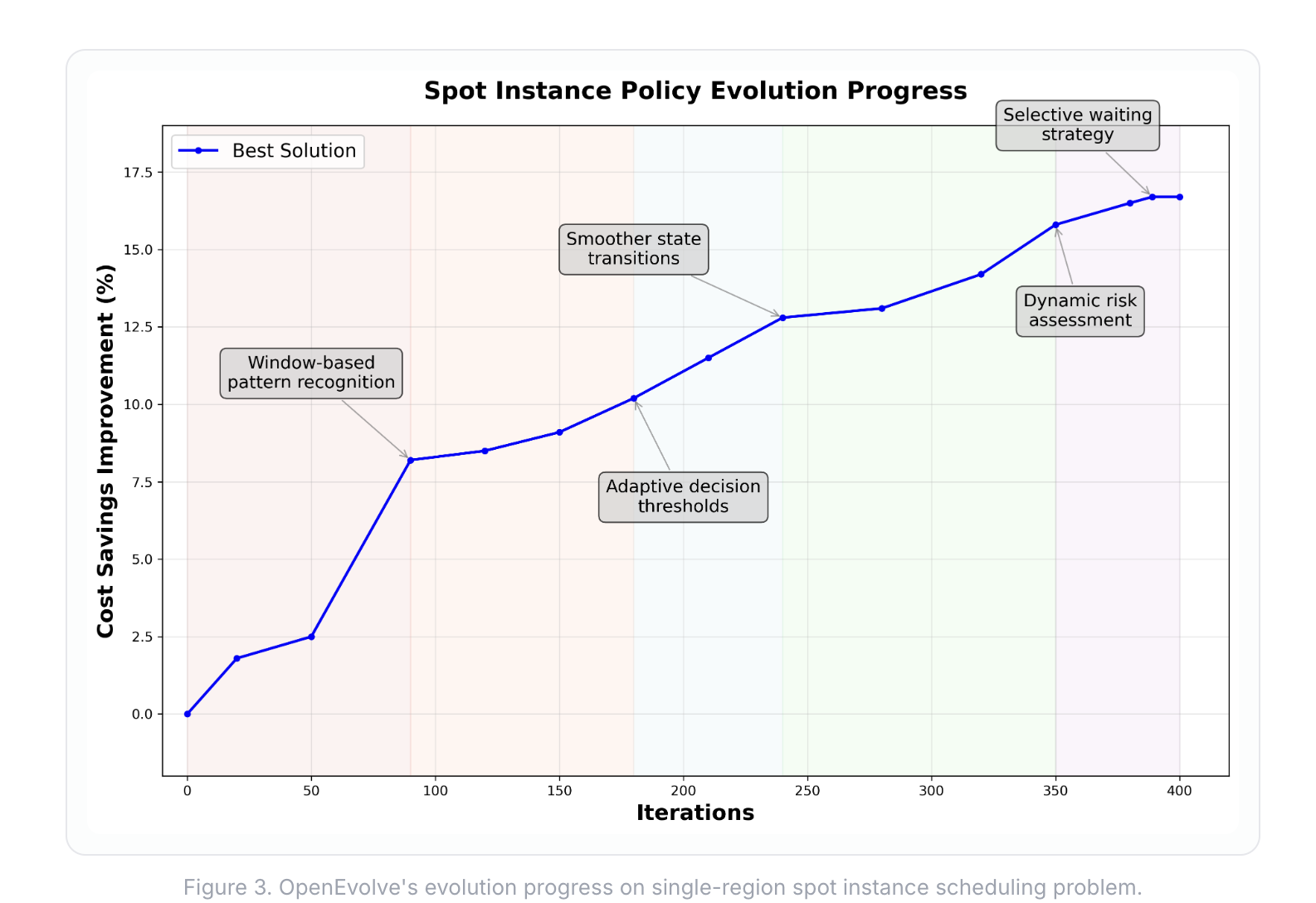

Automating Algorithm Discovery: A Case Study in Spot Instance Scheduling

This post is the second in our ADRS series, where we apply AI to optimize complex system problems. Here, we tackle spot instance scheduling, a classic cost-versus-reliability problem in the cloud. We demonstrate how OpenEvolve discovers novel algorithms that surpass the algorithm from an NSDI'24 Best Paper, achieving up to 16% and 48% cost savings in single and multi-region setups, respectively.

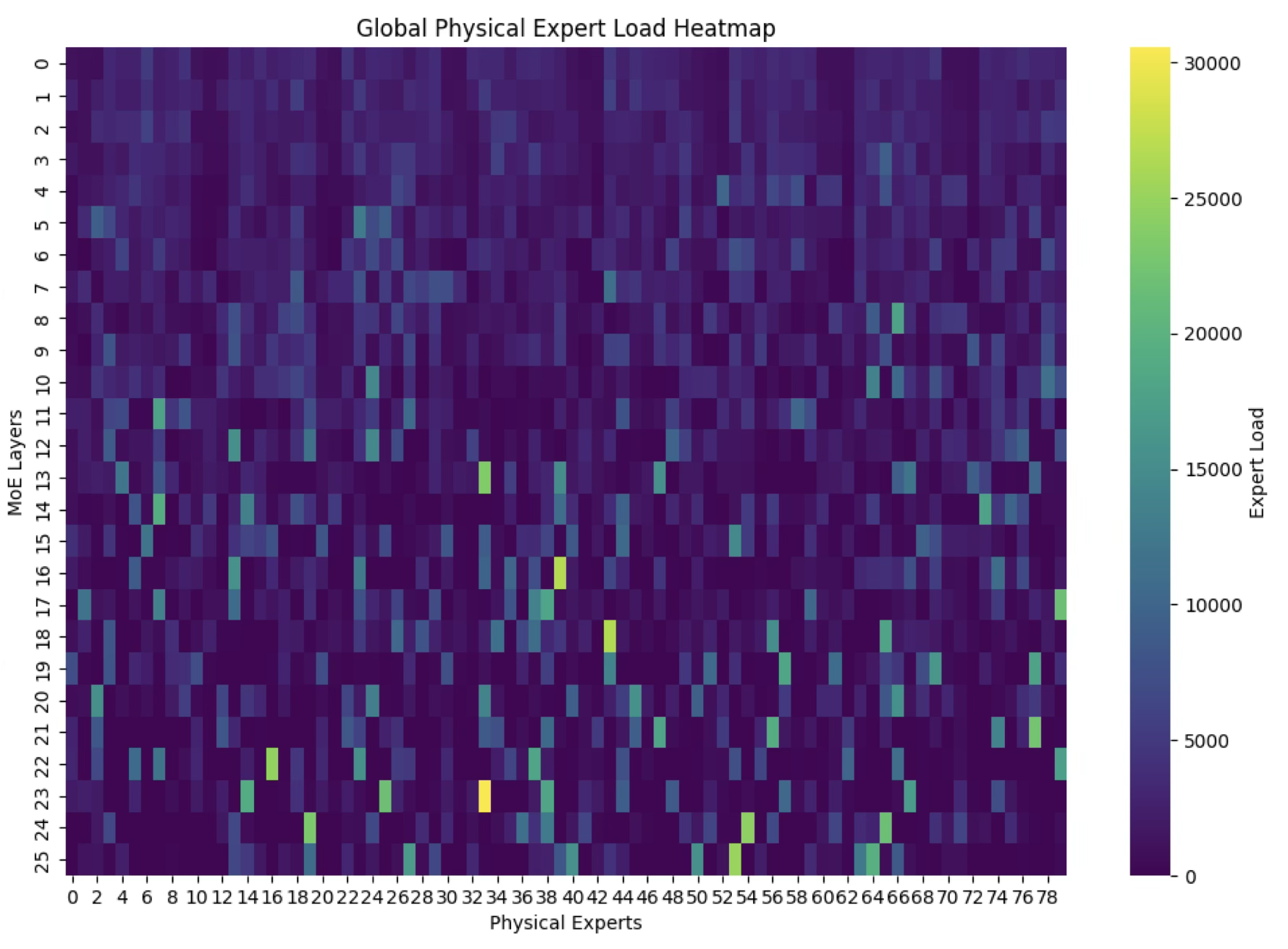

Automating Algorithm Discovery: A Case Study in MoE Load Balancing

This post is the first in a series of case studies in which we apply ADRS to optimize performance in various systems. We discuss the optimization of a key component in large language model (LLM) inference. Specifically, we demonstrate how OpenEvolve independently discovers and surpasses highly optimized algorithms engineered by human experts to achieve a 5.0x speedup.

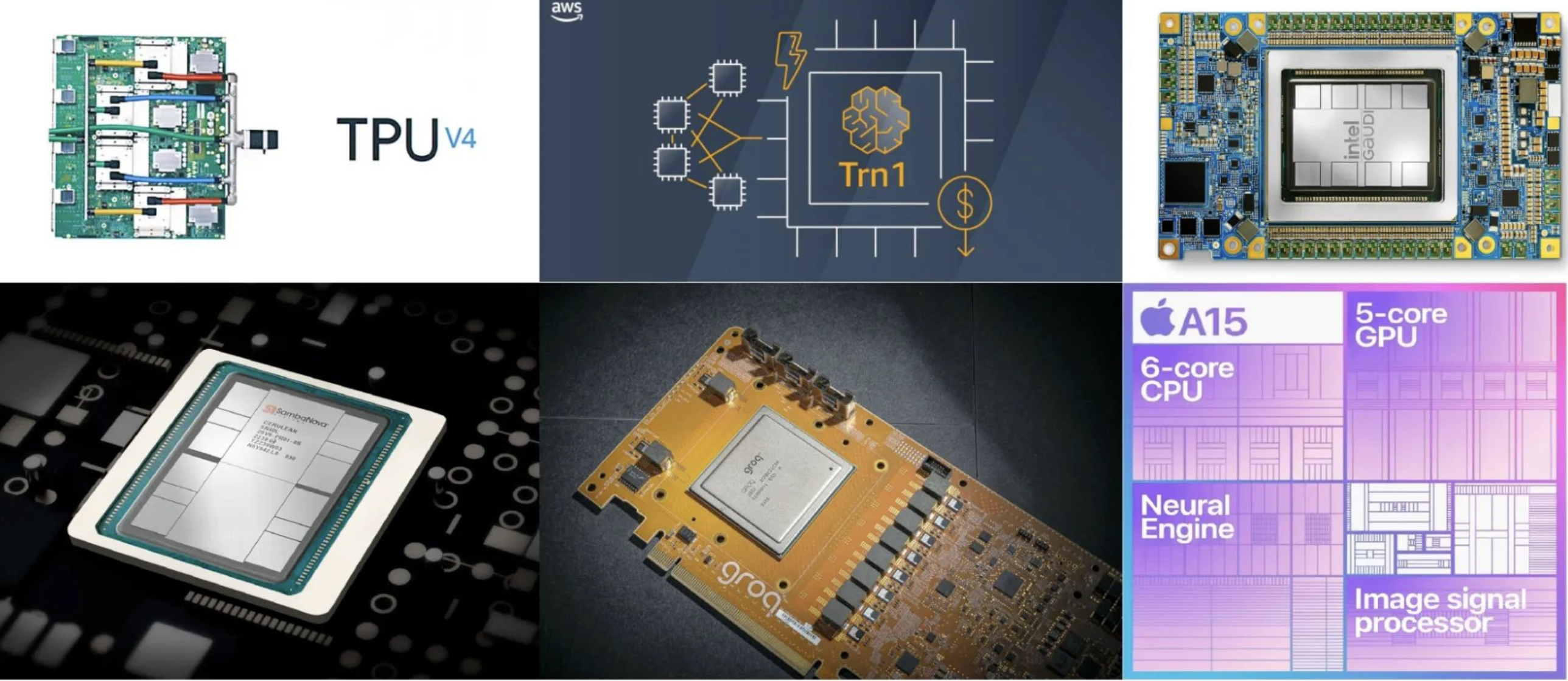

Barbarians at the Gate: How AI is Upending Systems Research

AI is no longer just tuning systems as a black box. It's now rewriting their core algorithms by treating the system as a white box and discovering solutions that can outperform human experts. This new approach, which we term AI-Driven Research for Systems (ADRS), can automate some of the most tedious parts of research.